In February 2024, a finance worker in Hong Kong joined what appeared to be a routine video call with colleagues. The chief financial officer was on screen, along with several team members. Instructions were clear: transfer $25 million. The employee complied. Every person on that call was a deepfake.

The scam worked not because the technology was flawless, but because it didn't need to be. "What mattered was timing and context," explains Santiago Pontiroli, team lead for threat intelligence research at Acronis. "Requests that create urgency, that bypass normal approval flows, or that slightly deviate from established patterns are still the most consistent indicators. The manipulation works because it fits into a process that allows a single interaction to trigger action."

This isn't an isolated incident. In 2019, criminals used an AI-generated voice to impersonate the chief executive of a UK energy firm's German parent company, convincing the UK firm's CEO to transfer about $240,000. According to Deloitte, deepfake content on social media platforms grew 550 per cent between 2019 and 2023, driven by advancements in generative AI tools. The technology has moved from novelty to industrial-scale fraud.

Why the Middle East faces heightened risk

As the UAE accelerates digital adoption across government and private sectors, the attack surface expands. "Government-led digitalisation builds trust in online communications, which has the unfortunate side effect of making them easier to exploit," says Mortada Ayad, vice president for META at Delinea. "The push for speed and convenience creates opportunities for attackers to use urgency against users."

The region's reliance on platforms like WhatsApp for business communication compounds the problem. Once an attacker operates within a trusted channel, adding a voice message or short video increases credibility. "People often overlook the fact that deepfakes aren't limited to video," Ayad notes. "Cloned audio can be just as convincing."

Financial services and critical infrastructure face the highest exposure. A Medius survey from 2024 found that 53 per cent of finance professionals have been targeted by deepfake scams, and 43 per cent admitted falling victim. In sectors like oil and gas, AI-generated content purporting to show infrastructure under attack can trigger market panic.

"Infrastructure in sectors such as mining, logistics, transportation and urban management can be impacted by disinformation campaigns," explains Andrea Sorri, segment development manager for smart cities at Axis Communications. "At a time when geopolitical tensions are influencing business activity across the Middle East, manipulated video content can cause people, countries and markets to panic."

How to spot a deepfake

While AI-generated content has become increasingly sophisticated, tells remain. Maher Yamout, lead security researcher at Kaspersky, points to several visual and audio inconsistencies that even non-experts can identify.

Lighting and shadows often betray deepfake videos. A person may appear to be outdoors or near a window, yet their face is lit as though under studio lighting. Hairlines frequently show blurring or unnatural colour transitions. Blinking patterns are also revealing — people typically blink 10 to 20 times per minute, but deepfakes often blink too frequently or barely at all.
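To make the blink-rate tell concrete, here is a minimal Python sketch. It assumes an earlier step has already produced an "eye aspect ratio" (EAR) value for each video frame, a common landmark-based measure that drops sharply when the eye closes. That extraction step, the 0.2 threshold and the function names are illustrative assumptions, not Kaspersky's method. The sketch counts runs of closed-eye frames as blinks, converts the count to blinks per minute and flags rates outside the 10 to 20 range cited above.

```python
def count_blinks(ear: list[float], threshold: float = 0.2, min_frames: int = 2) -> int:
    """Count blinks as runs of at least `min_frames` consecutive frames whose
    eye aspect ratio (EAR) falls below `threshold` (both values are assumptions)."""
    blinks, run = 0, 0
    for value in ear:
        if value < threshold:
            run += 1                 # eye still closed: extend the current run
        else:
            if run >= min_frames:    # eye reopened after a long-enough closure
                blinks += 1
            run = 0
    if run >= min_frames:            # clip ended while the eye was closed
        blinks += 1
    return blinks


def blink_rate_check(ear: list[float], fps: float,
                     low: float = 10, high: float = 20) -> tuple[float, bool]:
    """Return (blinks per minute, True if the rate falls outside [low, high])."""
    if not ear or fps <= 0:
        raise ValueError("need at least one frame and a positive frame rate")
    minutes = len(ear) / fps / 60
    rate = count_blinks(ear) / minutes
    return rate, not (low <= rate <= high)


# Synthetic one-minute clip at 30 fps: eyes open (EAR 0.3) with 15 three-frame blinks.
clip = [0.3] * 1800
for i in range(15):
    start = i * 120 + 50
    clip[start:start + 3] = [0.1] * 3

print(blink_rate_check(clip, fps=30))          # (15.0, False): within the normal range
print(blink_rate_check([0.3] * 1800, fps=30))  # (0.0, True): no blinks at all
```

A rate inside the normal range does not make a video genuine; this is one weak signal among many.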

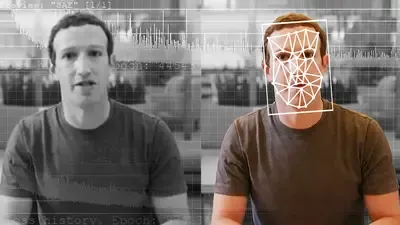

An example of a deepfake. Image Credit: CNBC

Audio deepfakes carry their own markers. Voices may sound unusually flat, lacking natural variation in tone. Real speech contains subtle imperfections — micropauses, breaths between phrases, the occasional cough or sniff. Synthetic voices either lack these nuances entirely or place them unnaturally.

But Ayad warns against relying solely on technical detection. While visual glitches may still exist today, they're fading fast. Behavioural signals such as unexpected requests, urgency, or pressure to act are better indicators. The safest response is always to pause and verify through a second, trusted channel.

The shift from systems to trust

Pontiroli emphasises that many deepfake incidents don't depend on breaking security controls. "What is changing is a shift away from trusting the interaction and toward validating the decision. Organisations are starting to treat any single communication, regardless of how convincing, as insufficient on its own for sensitive actions."

This requires structural changes. Organisations are implementing mandatory dual approval for high-value transactions, enforcing out-of-band verification through pre-registered contacts, and restricting changes to payment details without independent validation. "The common factor is removing the ability for any single channel, whether email, voice, or video, to directly trigger a financial or operational outcome," Pontiroli says.
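The controls Pontiroli lists can be expressed as a simple policy check. The Python sketch below is a hypothetical illustration, not any vendor's product: the `PaymentRequest` fields, the 50,000 threshold and the `may_execute` function are all invented for this example. The channel a request arrived on is recorded but never consulted, so no email, voice note or video call can satisfy the rules on its own.

```python
from dataclasses import dataclass, field

HIGH_VALUE_THRESHOLD = 50_000  # hypothetical cut-off for "high-value" transactions


@dataclass
class PaymentRequest:
    requester: str
    amount: float
    channel: str                                  # "email", "voice", "video": deliberately ignored below
    changes_payee_details: bool = False
    approvers: set[str] = field(default_factory=set)
    confirmed_out_of_band: bool = False           # e.g. call-back to a pre-registered contact
    payee_details_validated: bool = False         # independent check of new bank details


def may_execute(req: PaymentRequest) -> tuple[bool, str]:
    """Hypothetical policy: no single interaction can trigger a payment by itself."""
    independent = req.approvers - {req.requester}   # self-approval does not count
    if req.amount >= HIGH_VALUE_THRESHOLD:
        if len(independent) < 2:
            return False, "needs two independent approvers"
        if not req.confirmed_out_of_band:
            return False, "needs out-of-band confirmation"
    if req.changes_payee_details and not req.payee_details_validated:
        return False, "payee change not independently validated"
    return True, "ok"


# A convincing video call from the "CFO" is still just one channel and one person.
call = PaymentRequest(requester="cfo", amount=25_000_000, channel="video", approvers={"cfo"})
print(may_execute(call))   # (False, 'needs two independent approvers')

call.approvers |= {"controller", "treasury_lead"}
call.confirmed_out_of_band = True
print(may_execute(call))   # (True, 'ok')
```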

Axis Communications has responded by integrating cryptographic signatures into video systems. "Signed video adds cryptographic signatures to captured video, collecting information from previous frames and signing it using a private encryption key," Sorri explains. "Users can then verify the information using that signature and the corresponding public key, ensuring end-to-end integrity of video data."
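The mechanism Sorri describes, signing each frame together with information carried over from earlier frames, can be sketched in Python using only the standard library. This is an illustrative assumption, not Axis's implementation. It chains SHA-256 hashes so that each signature covers every earlier frame, and it uses textbook RSA with a deliberately tiny key built from two known primes so the private-key/public-key split is visible. The small key and the lack of padding make it insecure; a real system would use a vetted signature scheme.

```python
import hashlib

# Toy RSA key pair from two known Mersenne primes. Far too small and unpadded
# for real use; it only shows signing with a private key and checking with a public one.
P, Q = 2**61 - 1, 2**89 - 1
N = P * Q                              # public modulus
E = 65537                              # public exponent
D = pow(E, -1, (P - 1) * (Q - 1))      # private exponent (needs Python 3.8+)


def _as_int(digest: bytes) -> int:
    """Map a SHA-256 digest into the range [0, N)."""
    return int.from_bytes(digest, "big") % N


def sign_stream(frames: list[bytes]) -> list[int]:
    """Sign each frame together with a running hash of all earlier frames."""
    signatures, chain = [], b""
    for frame in frames:
        chain = hashlib.sha256(chain + frame).digest()   # carry previous frames forward
        signatures.append(pow(_as_int(chain), D, N))      # private-key operation
    return signatures


def verify_stream(frames: list[bytes], signatures: list[int]) -> bool:
    """Rebuild the hash chain and check every signature with the public key (N, E)."""
    if len(frames) != len(signatures):
        return False
    chain = b""
    for frame, sig in zip(frames, signatures):
        chain = hashlib.sha256(chain + frame).digest()
        if pow(sig, E, N) != _as_int(chain):
            return False
    return True


frames = [b"frame-0", b"frame-1", b"frame-2"]
sigs = sign_stream(frames)
print(verify_stream(frames, sigs))                                # True
print(verify_stream([frames[0], b"edited", frames[2]], sigs))     # False: frame replaced
print(verify_stream([frames[0], frames[2]], [sigs[0], sigs[2]]))  # False: frame dropped
```

Because each signature covers the running hash, replacing or dropping a frame breaks verification from that point on, and anyone holding only the public values N and E can run the check.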

Regulatory response in the UAE

The UAE is beginning to address these risks through targeted measures. Just last week, the Central Bank prohibited banks from requesting sensitive documents over channels like WhatsApp. Last year, authorities introduced a five-point classification system indicating the level of AI involvement in content creation.

"While attackers won't adhere to these standards, measures like this help build public awareness and digital literacy, which remain one of the strongest lines of defence," Ayad says. "Ultimately, the shift needs to be from trusting what we see to verifying what we're asked to do."

Ayad argues that too many organisations still view deepfakes as a media issue when they are fundamentally an identity risk. "The priority should be verifying the legitimacy of requests, not just the content itself. People need to feel confident challenging unusual requests, regardless of who they appear to come from."

As deepfakes become cheaper and more accessible, the threat will intensify. The technology exists — the question is whether organisations can adapt their verification cultures faster than attackers can refine their tactics.
